“Web performance” is often referred to by its contracted form, “webperf”. WPO stands for “Web Performance Optimization” and, as the name suggests, its purpose is to improve the level of web performance. Not very clear for a novice, is it? To be precise, we’re talking here about technical performance (loading speed), not commercial performance (conversions, RPM, etc.). The two are very different, but are sometimes confused.

Definition of web performance

If we had to define it, web performance would be “the technical discipline aimed at ensuring that web pages are displayed quickly and smoothly in browsers, during their loading and throughout their use”. This is the definition we’ve included in our webperf glossary (in French), and it seems to us to be the most explicit and accessible for non-technical profiles.

What are the challenges of web performance?

Why web performance? A simple question at first glance, but one with many answers. Let’s take a look at the benefits of offering web users a fast site.

A better User Experience

Improving the speed of a site’s pages means ensuring that a maximum number of users can access them, whatever their access conditions (Internet connection, device power, web browser, etc.). For the majority of visitors, this means shorter waits and greater fluidity when consulting content pages or using online tools. The User Experience is thus of much higher quality on a website optimized for web performance.

Numerous studies and case studies have demonstrated the positive impact of performance optimization on indicators such as conversion rate, bounce rate and sales. Amazon, a pioneer in this field, estimates, for example, that every 100-millisecond improvement in loading time results in a 1% increase in revenue. Other cases highlighted by Yelp, Mozilla and Walmart show even more spectacular gains. And that’s without mentioning the impact of increased customer loyalty, which other reports have also highlighted.

In the specific context of Google Ads (formerly AdWords) or other advertising campaigns, landing page optimization can have a significant impact on ROI, notably by reducing the cost of acquiring new customers and prospects.

Improving visibility in Google

The second reason why web performance is so popular has to do with its greatest ambassador: Google. To reduce the cost of indexing pages, the number 1 search engine encourages site publishers to improve page loading times. The crawl phase, which is essential to update the results pages for the millions of queries received daily, thus becomes less costly for the American company, whose data centers weigh heavily on its electricity bill.

From Google’s point of view, faster sites also mean faster display of advertising space. Google’s core business remains online advertising, via its leading Google Ads platform. These are the 2 reasons why Google is urging site publishers to improve their loading times via its Search Console tool. The “On-Page Experience” section includes a “Core Web Vitals” section, which has grown enormously over the past 5 years.

Web performance is now officially an SEO ranking criterion, even if its weight in ranking algorithms is obviously less than that of traditional factors such as backlink authority and content quality.

Reduce hosting costs

By reducing the number of HTTP requests, the weight of static resources and the need for costly server-side calculations (execution of PHP scripts, SQL queries, etc.), web performance optimization can significantly reduce server load. This translates into increased speed, particularly during peak loads and periods of high demand (such as a TV appearance). If the delta is substantial, this can also be an opportunity to downgrade the server configuration to less powerful hardware on the processor and RAM side.

For a site with heavy daily traffic hosted on a dedicated server or a large VPS, the difference in hosting costs can be really interesting, and in itself justify a web performance optimization project.

Saving the planet from global warming?

As web performance specialists, we really want to believe it (in French). A site whose loading times have been optimized is bound to be lighter and less resource-hungry than an equivalent conventional site (on both the hosting and user sides). It will therefore generate fewer greenhouse gas emissions, ensuring a lower carbon footprint. However, we know that in the digital sector in the broadest sense, the web is only responsible for 1 to 2% of global emissions.

While we can contribute to making the web a better place, it’s not our work as experts that will save the planet from irreparable global warming.

How do you measure web performance?

As in any technical field, it’s essential to have performance indicators at your disposal, so you can compare several sites with each other, or track changes over time. As in the automotive world, where a car’s performance can be measured in terms of torque, horsepower, top speed or 0-100 km/h acceleration time, there are dozens of ways of calculating a page’s web performance.

What are the most relevant indicators?

TTFB: server response time

Some indicators have been used for as long as we’ve been talking about webperf. Such is the case of TTFB (acronym for Time to First Byte), which measures the time between the browser sending its request and receiving the first byte of the response from the server hosting the site being visited. Simple to calculate, it’s ideal for addressing web performance issues related to hosting (data center too far away, incorrect processor or RAM sizing, lack of cache system, poorly configured SQL databases, etc.).
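As a rough illustration, browsers that support the Navigation Timing API expose a `responseStart` timestamp from which TTFB can be derived. The sketch below works on a plain object with the same field names so that it can run outside a browser; the function names `timeToFirstByte` and `serverWaitTime` and the sample values are hypothetical.

```typescript
// Subset of the fields exposed by a PerformanceNavigationTiming entry (values in ms).
interface NavigationTimingLike {
  startTime: number;     // start of the navigation
  requestStart: number;  // the browser starts sending the request
  responseStart: number; // the first byte of the response is received
}

// TTFB counted from the start of the navigation to the first response byte.
// Measured this way, it also includes redirects, DNS lookup and connection setup.
function timeToFirstByte(entry: NavigationTimingLike): number {
  return entry.responseStart - entry.startTime;
}

// Narrower view: only the wait between sending the request and the first byte.
function serverWaitTime(entry: NavigationTimingLike): number {
  return entry.responseStart - entry.requestStart;
}

// Hypothetical values, for illustration only.
const sample: NavigationTimingLike = { startTime: 0, requestStart: 120, responseStart: 480 };
console.log(timeToFirstByte(sample), serverWaitTime(sample));
```

In a browser, `performance.getEntriesByType("navigation")[0]` returns an entry carrying these fields.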

Speed Index: perceived speed

The other metric that has retained all its meaning over the years is the Speed Index, which measures how quickly the visible area of the page (often referred to as the viewport) is filled in during loading. This requires video capture, which is both complex and costly to set up. This is why not all tools offer it, but also why the indicator can sometimes vary considerably depending on the measurement tool used.
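To make the idea more concrete, Speed Index is commonly described as the area above the visual-progress curve: the longer the viewport stays incomplete, the higher the score. The sketch below assumes the video capture has already been reduced to a list of frames with a visual completeness between 0 and 1; the `VisualFrame` type and the `speedIndex` function are hypothetical names, and real tools may compute completeness differently.

```typescript
interface VisualFrame {
  timeMs: number;       // capture time since the start of navigation
  completeness: number; // fraction of the final viewport already rendered, 0..1
}

// Sums (1 - completeness) over time, treating progress as a step function:
// the viewport is considered blank until the first frame, and each frame's
// completeness holds until the next frame.
function speedIndex(frames: VisualFrame[]): number {
  const sorted = [...frames].sort((a, b) => a.timeMs - b.timeMs);
  let total = 0;
  let prevTime = 0;
  let prevCompleteness = 0;
  for (const frame of sorted) {
    total += (frame.timeMs - prevTime) * (1 - prevCompleteness);
    prevTime = frame.timeMs;
    prevCompleteness = frame.completeness;
  }
  return total;
}

// Two hypothetical pages that both finish rendering at 2000 ms.
const progressive: VisualFrame[] = [
  { timeMs: 1000, completeness: 0.5 },
  { timeMs: 2000, completeness: 1 },
];
const allAtOnce: VisualFrame[] = [{ timeMs: 2000, completeness: 1 }];
console.log(speedIndex(progressive), speedIndex(allAtOnce));
```

The page that shows half of its content after one second gets a lower (better) score than the one that stays blank until the end, even though both finish at the same time.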

What are Core Web Vitals?

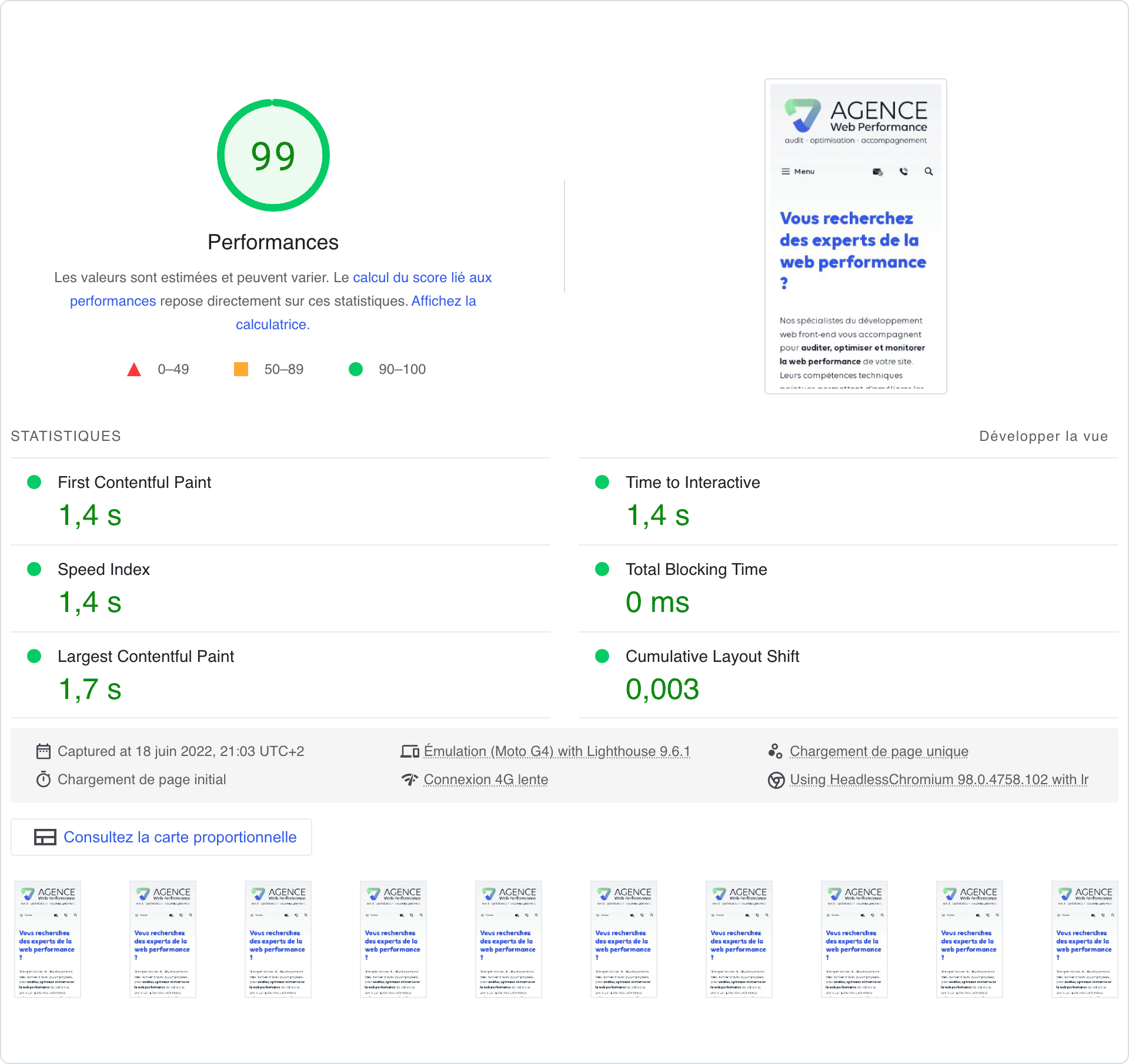

In 2020, Google introduced a set of metrics called “Web Vitals”, including 3 “Core Web Vitals” which have since become the standard. Each aims to estimate the level of performance of a web page from a specific angle. Between them, they are supposed to offer a comprehensive view of the performance level of a page, and even of a site when calculating averages as Google does with its origin reports. Here are the 3 indicators:

LCP

An acronym for “Largest Contentful Paint”, this performance indicator measures the time it takes for the page’s main visible element to be displayed. This is often an image, but it can also be a title or a block of text. A good LCP should be less than 2.5 seconds, and should never exceed 4 seconds.

FID

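An acronym for “First Input Delay”, this performance indicator measures the delay between a user’s first interaction with the page (a click or a tap, for example) and the moment the browser can start responding to it. It is heavily impacted by JavaScript, and in particular by third-party scripts. To be considered good, it must be less than 100 milliseconds. Above 300 milliseconds, it is considered poor. Note that in March 2024, Google replaced FID with INP (Interaction to Next Paint) in the Core Web Vitals.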

CLS

An acronym for “Cumulative Layout Shift”, this performance indicator measures a page’s ability to offer an interface whose elements remain stable over time. No visual shift, either vertical or horizontal, should occur during resource loading. It is a unitless score: below 0.1 is considered good, above 0.25 is considered poor.

The 3 other metrics in the “Web Vitals” section are FCP (First Contentful Paint), which measures the time it takes for the first visible element of the page to be displayed, TTI (Time to Interactive), which measures the time it takes for a page to become interactive for the user, and the Speed Index, already presented above.
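The thresholds quoted above for LCP, FID and CLS can be turned into a small classifier. This is only a sketch: the `rate` function and the `THRESHOLDS` table are hypothetical names, the intermediate “needs improvement” label is the one Google uses between the two thresholds, and treating a value exactly equal to a threshold as belonging to the better category is an assumption.

```typescript
type Rating = "good" | "needs improvement" | "poor";

interface Threshold {
  good: number; // at or below this value: good
  poor: number; // above this value: poor
}

// Thresholds as quoted in this article.
const THRESHOLDS: Record<"LCP" | "FID" | "CLS", Threshold> = {
  LCP: { good: 2500, poor: 4000 }, // milliseconds
  FID: { good: 100, poor: 300 },   // milliseconds
  CLS: { good: 0.1, poor: 0.25 },  // unitless score
};

function rate(metric: keyof typeof THRESHOLDS, value: number): Rating {
  const { good, poor } = THRESHOLDS[metric];
  if (value <= good) return "good"; // boundary handling is an assumption
  if (value <= poor) return "needs improvement";
  return "poor";
}

console.log(rate("LCP", 1800), rate("FID", 200), rate("CLS", 0.3));
```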

What tools are used to measure web performance?

There are 2 main families of tools used to assess the performance of a page or site: synthetic testing tools, which provide access to “laboratory” data, and Real User Monitoring (RUM) tools, which provide access to field data, i.e. data observed by real users during their browsing sessions on the site in question. They operate in very different ways, each with its own advantages and disadvantages.

Synthetic measurement tools

There are dozens of tools available for calculating web performance. Some are free, others are paid for, but the majority are available in both free and paid versions, with restrictions on functionality and/or test volumes. All offer at least some information on traditional metrics and Web Vitals. What sets them apart is their ability to provide relevant recommendations based on a more or less qualitative and up-to-date repository. Here is a selection of the most popular, bearing in mind that there are others just as relevant.

PageSpeed Insights

This is the most popular tool, mistakenly considered by many to be what Google takes into account in its algorithm. Very simple to use, it provides fairly basic recommendations for improving the overall score, expressed out of 100.
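PageSpeed Insights can also be queried programmatically through its public API (v5). The sketch below only builds the request URL and reads the performance score from a response-shaped object, without making any network call; the helper names are hypothetical, and the response fields shown are a minimal slice that should be checked against the official API reference.

```typescript
// Builds a request URL for the PageSpeed Insights API (v5).
function buildPsiUrl(pageUrl: string, strategy: "mobile" | "desktop" = "mobile"): string {
  const endpoint = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed";
  const params = new URLSearchParams({ url: pageUrl, strategy });
  return `${endpoint}?${params.toString()}`;
}

// Minimal slice of the response: only the part needed for the score.
interface PsiResponseLike {
  lighthouseResult?: {
    categories?: { performance?: { score: number | null } };
  };
}

// The API reports the score between 0 and 1; the interface displays it out of 100.
function performanceScore(response: PsiResponseLike): number | null {
  const score = response.lighthouseResult?.categories?.performance?.score;
  return score == null ? null : Math.round(score * 100);
}

// Hypothetical response fragment, for illustration only.
const fakeResponse: PsiResponseLike = {
  lighthouseResult: { categories: { performance: { score: 0.87 } } },
};
console.log(buildPsiUrl("https://example.com/"), performanceScore(fakeResponse));
```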

Lighthouse

Another essential tool in the Google ecosystem, Lighthouse can be accessed directly from the Chrome browser’s developer tools, or through an extension. Recommendations are the same as for PageSpeed Insights, with additional sections dedicated to accessibility, SEO and applications.

GTmetrix

An independent, pay-per-use tool, GTmetrix offers more advanced analysis functionalities than Google’s tools. It can also be used to monitor sites at regular intervals, tracking optimization work and detecting any regression.

WebPageTest

WebPageTest is an advanced, technical tool ideal for understanding site performance issues. It is particularly appreciated for its detailed visualization of the loading waterfall, and for its many features in the paid version.

Real User Monitoring tools

SaaS tools of the RUM type are less numerous and less popular, and for good reason: they require deployment before they can provide data, and their operation involves significant costs which are felt in the selling price of existing packages. But Google, once again, is collecting, consolidating and sharing free of charge the most important database in the field of web performance: the Chrome User Experience Report, or CrUX. Its French translation, Rapport d’expérience utilisateur Chrome, is rarely used.

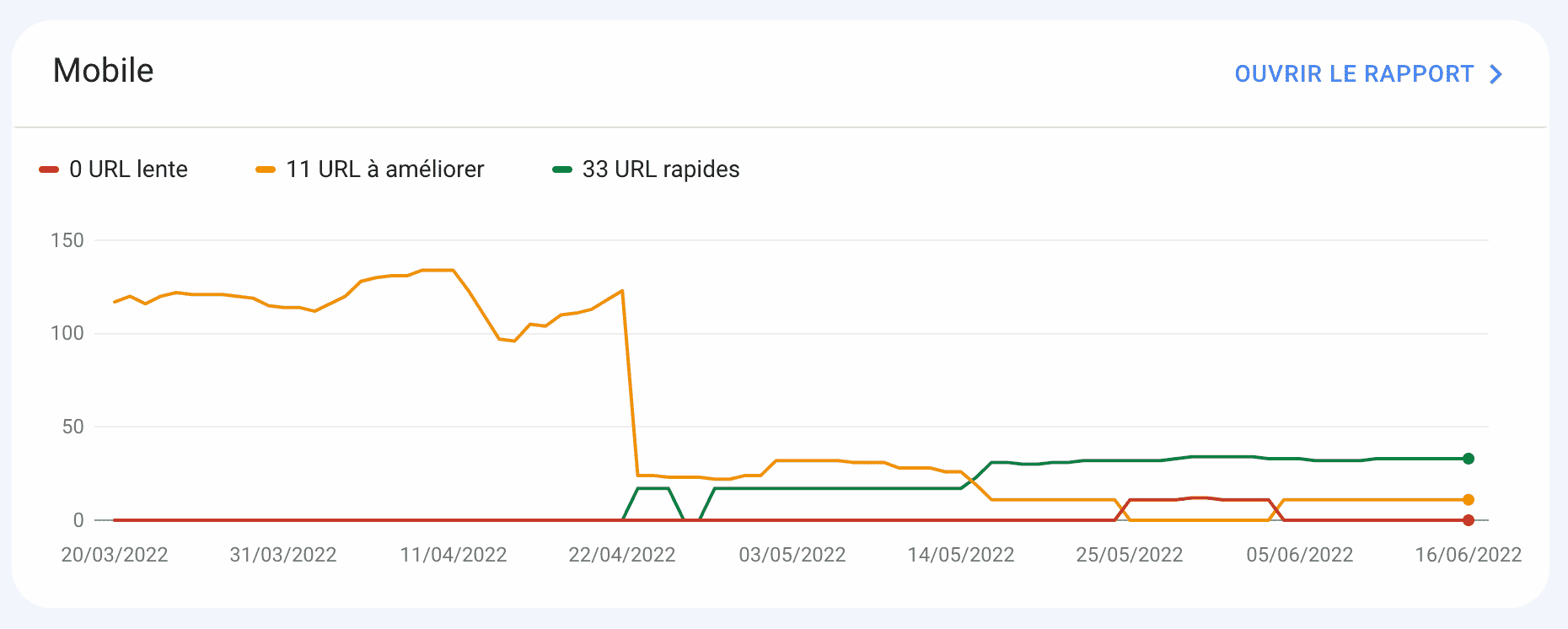

What is CrUX?

CrUX aggregates data collected from Google Chrome users who are logged into a Google account and have agreed to share usage statistics. This is done automatically and transparently, without any action on the part of users or site publishers. The result is a veritable goldmine that Google uses both in its tools for publishers (Search Console, Lighthouse, PageSpeed Insights) and in its ranking algorithms.

CrUX data are thus literally your web performance scores in Google’s eyes. This is a first: since the demise of PageRank, Google has never before shared data used as such within its ranking algorithms. While its impact is negligible for most queries, it remains one of the few levers whose effect can be observed directly on Google’s side.

What other RUM tools are there for webperf?

If you’d like to collect information from your actual users without limiting yourself to the browsers supported by CrUX, there are several solutions: SpeedCurve, Raygun, Contentsquare or appYuser. Bear in mind that they are all paid solutions, with packages or contracts based on monthly or annual page view volume. On large e-commerce sites, this can quickly add up to a substantial budget. Solutions backed by Application Performance Monitoring (APM) can help reduce costs as part of a comprehensive monitoring strategy (frontend and backend).
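Whatever the RUM tool, field data comes as a distribution of values rather than a single number. Google’s documentation describes assessing Core Web Vitals at the 75th percentile of page loads, so a similar summary can be computed on your own samples. The sketch below uses the nearest-rank method, which is an assumption (tools may interpolate differently), and the sample values are hypothetical.

```typescript
// Returns the p-th percentile of a list of samples using the nearest-rank method.
function percentile(values: number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length); // 1-based rank
  return sorted[Math.max(rank, 1) - 1];
}

// Hypothetical LCP samples (in ms) collected from real browsing sessions.
const lcpSamples = [1200, 1800, 2100, 2400, 2600, 3100, 3900, 5200];
console.log(percentile(lcpSamples, 75));
```

With these samples, half of the sessions are under 2.5 seconds, yet the 75th percentile is above that threshold: a few slow sessions are enough to change the verdict.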

How to improve web performance?

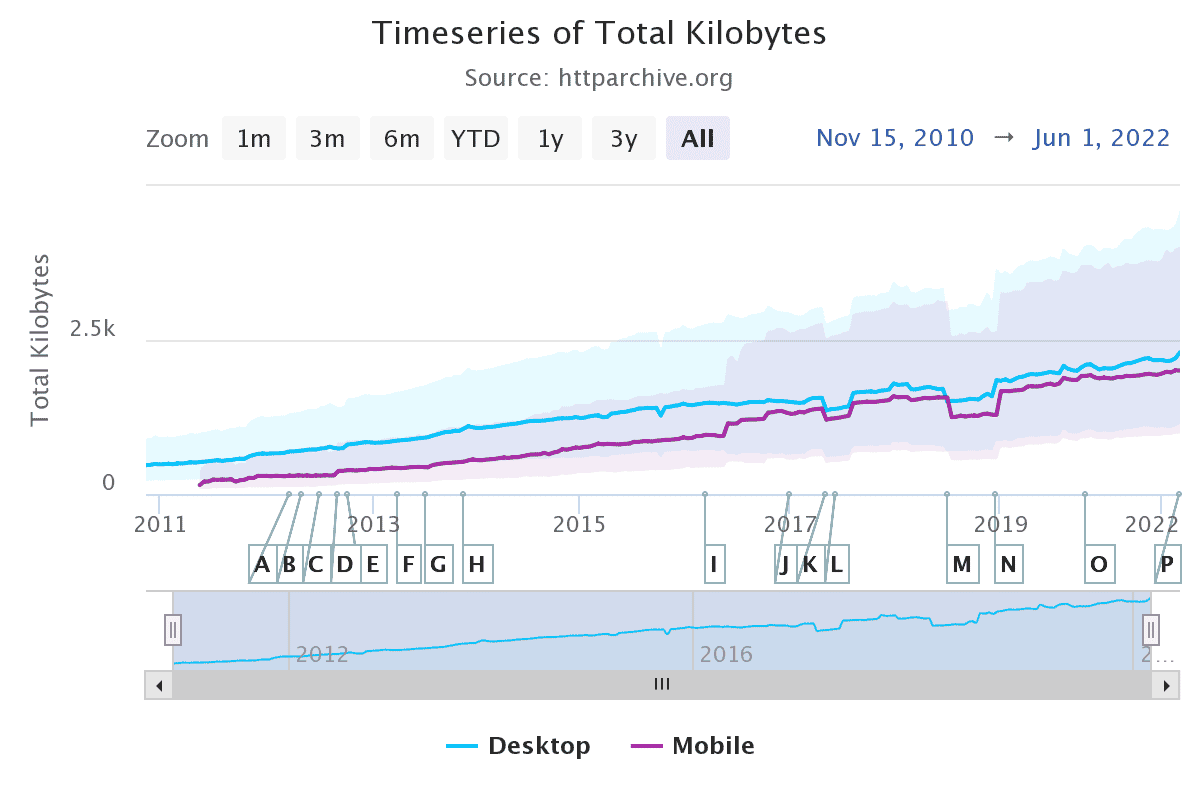

Web performance is a technical discipline that needs to be taken into account very early on in the site creation process, in the same way as accessibility, SEO and security. To be sure of having a site that loads quickly, it’s essential to address the issue as early as the specifications stage. Technical choices in terms of stack (framework, SSR solution, server architecture…) and specific developments can then be adapted to meet the objectives set in terms of average page weight or any other performance indicator (FCP, Speed Index, Blocking Time…).
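Objectives of this kind are often written down as a “performance budget” that can be checked automatically, for example in a continuous integration pipeline. The sketch below is one possible shape for such a check; the `Budget` type, the `overBudget` function, the metric names and the limits are all hypothetical.

```typescript
interface Budget {
  metric: string; // name of the indicator, e.g. "pageWeightKb"
  max: number;    // highest acceptable value
}

// Returns the names of the metrics that exceed their budget.
// A metric with no measurement is reported too, so that gaps are not silently ignored.
function overBudget(measured: Record<string, number>, budgets: Budget[]): string[] {
  return budgets
    .filter((b) => (measured[b.metric] ?? Infinity) > b.max)
    .map((b) => b.metric);
}

// Hypothetical objectives set at the specifications stage.
const budgets: Budget[] = [
  { metric: "pageWeightKb", max: 1500 },
  { metric: "fcpMs", max: 1800 },
  { metric: "speedIndex", max: 3000 },
];

// Hypothetical measurements from a synthetic test.
const measured = { pageWeightKb: 1800, fcpMs: 1500, speedIndex: 3400 };
console.log(overBudget(measured, budgets));
```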

Turn to a web performance specialist

Of course, this approach is still rare, and most websites continue to go into production without the slightest focus on web performance, even those of leading web agencies. Optimizing web performance late in a project to meet objectives is a challenge, especially for development teams who often lack expertise in the field.

The solution is to turn to specialists for a performance audit, and if possible, performance optimization. We offer this type of service, with consistently attractive gains thanks to tools and methodologies developed over years of experience. The breakdown of optimizations is faithful to Pareto’s Law, with 80% classic optimizations and 20% more specific, resulting from technical choices specific to each customer. It’s in this latter segment that the expert eye brings maximum added value, enabling us to achieve sometimes ambitious goals.

Up-skilling your teams or your service provider

The other solution is to tackle the problem at its source: front-end developers. Whether this involves watching free videos, reading articles and reference resources such as web.dev, or taking part in specialized training courses, the idea is to lay the foundations for them to acquire the right reflexes and habits. All of this needs to be complemented by regular monitoring, as the technical environment is constantly evolving: CSS, HTML and JavaScript standards, web browsers, frameworks, tools, SaaS solutions, and so on.

This is the best way to create a performance culture within the technical teams, but also across the business, marketing and UX/UI teams. All these profiles have a role to play in improving page speed, whether through their development, choice of third-party tools or interface and tool design.

What can we say in conclusion?

As you’ll have gathered from reading this article, web performance is a subject as vast as it is complex. KPIs, tools and methodologies exist, but are generally poorly understood and mastered. Even front-end developers, who use the same languages and tools as web performance experts on a daily basis, are still too often unaware of the impact of particular choices on page load times or interactivity. These are the people who, day after day, through their freelance work or web agency, contribute to creating the web as we know it, with the aberrations we have to deal with.

Just as SEO has seen the birth of SEO agencies, we’ve taken up the challenge of creating the first agency specializing in web performance. If you’re looking to carry out projects in this field, don’t hesitate to call on us: our cutting-edge expertise is ideal for prioritizing an optimization roadmap, making architecture and tool choices, or helping your teams to upgrade their skills. We’re here to help you build a faster web that meets the expectations of your customers and visitors.